Sam Altman’s 13-page policy blueprint, titled ‘Industrial Policy for the Intelligence Age,’ outlines initiatives aimed at ensuring that the rise of AI benefits society at large. He emphasizes that these proposals are a starting point for discussion rather than definitive solutions.

OpenAI has released a 13-page policy document calling for sweeping economic reforms in preparation for what it describes as approaching superintelligence. Its key proposals include taxes on automated labor, a national public wealth fund financed in part by AI companies, and pilot programs for a 32-hour workweek.

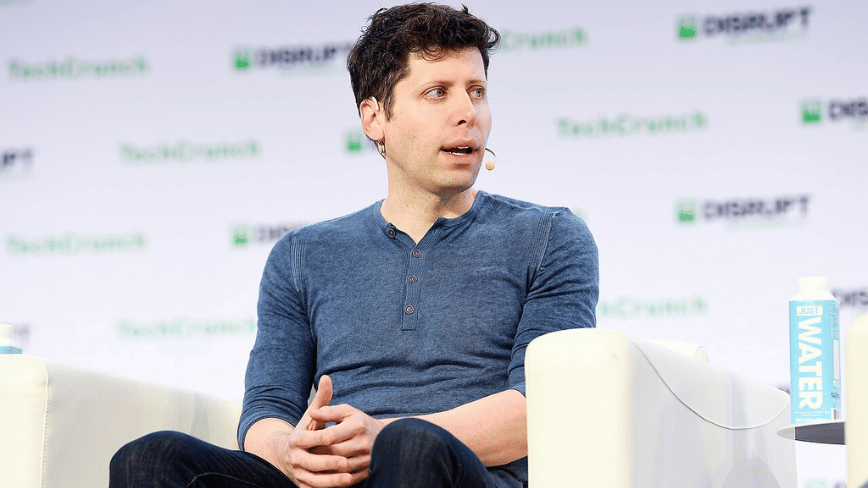

The document was released as Congress prepares to debate AI legislation. In an exclusive interview, OpenAI CEO Sam Altman compared the scale of change AI could bring to the Progressive Era and the New Deal, and said the most immediate threats from advanced AI include cyberattacks and biological weapons.

One of the document's most ambitious proposals is a public wealth fund. OpenAI recommends that the government establish a nationally managed fund, financed in part by contributions from AI companies, that would invest both in AI firms and in other businesses that adopt the technology. The fund's returns would be paid directly to American citizens, much like Alaska's Permanent Fund, which pays residents an annual dividend from oil revenues.

On labor, the document proposes taxing automated labor and shifting the tax base away from payroll taxes toward capital gains and corporate income taxes. The rationale is that AI could erode the wage and payroll revenue that currently funds Social Security.

The 32-hour workweek is framed as an ‘efficiency dividend’ from AI-driven productivity gains, a way to balance the benefits of increased automation against the need to preserve adequate employment.

The document also lays out what it calls ‘containment playbooks’ for scenarios in which dangerous AI systems become autonomous and able to replicate themselves. OpenAI acknowledges that such systems could be difficult to control and argues that a coordinated government response would be necessary.

The blueprint also envisions automatic triggers for safety nets: when metrics of AI-driven job displacement cross predetermined thresholds, benefits such as unemployment payments and wage insurance would increase automatically, then phase out as conditions stabilize.

Altman told Axios that a major cyberattack enabled by emerging AI technologies is ‘totally possible’ within the next year, and that the creation of novel pathogens with AI models is no longer a merely theoretical concern.

He was open about the document's dual nature: OpenAI is building the very technologies it warns about, and presenting itself as a responsible actor with solutions is also a way to shape regulation before rules are imposed on the industry. Rivals such as Anthropic have pursued similar strategies.

The policy paper arrives at a pivotal moment for OpenAI: the company is preparing for an initial public offering (IPO), recently closed a $110 billion private funding round, and faces scrutiny over its transition away from nonprofit status.

Whether the proposals are driven by altruism or by strategic calculation, Altman stressed the need for serious debate: ‘Some will be good. Some will be bad. But we do feel a sense of urgency. And we want to see the debate of these issues really start to happen with seriousness.’