About a week ago, the Commerce Department’s Center for AI Standards and Innovation (CAISI) announced agreements with Microsoft, xAI, and Google that allow the government to inspect AI models before they’re released to the general public. Anthropic and OpenAI signed similar agreements back in 2024. But the text of that announcement now has to be pulled from the Wayback Machine, because it is currently missing from the CAISI website. Reuters appears to have been the first to notice, reporting on Monday afternoon that the original URL resolved to an error page reading “Sorry, we cannot find that page,” and later redirected to the main CAISI page on the Commerce Department website. As of this writing on Monday night, the URL still redirects to the CAISI page.

This unexpected removal has fueled speculation and concern among technology watchdogs and policy analysts. The agreements, announced with considerable fanfare on May 5, 2026, were touted as a key step in the government’s efforts to understand and mitigate risks associated with frontier AI models. The archived version of the announcement, which is still accessible via the Internet Archive, states that CAISI will conduct pre-deployment evaluations and targeted research to better assess frontier AI capabilities and advance the state of AI security. These agreements build on previously announced partnerships, which have been renegotiated to reflect CAISI’s directives from the secretary of commerce and America’s AI Action Plan.

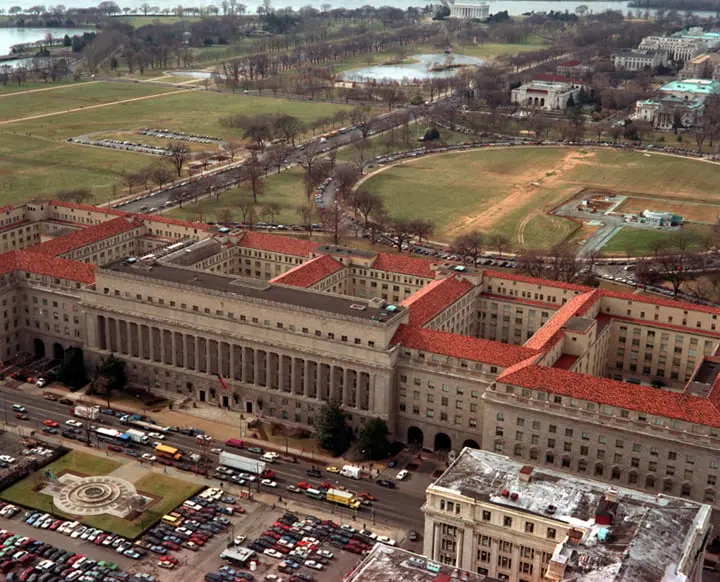

The disappearance of the official page raises immediate questions about transparency in AI governance. The United States has been struggling to establish a coherent federal strategy for overseeing rapidly advancing artificial intelligence. The creation of CAISI itself was part of a broader push to centralize AI safety efforts within the Department of Commerce, following the National Institute of Standards and Technology (NIST) AI Risk Management Framework. NIST has long played a central role in shaping technical standards for AI, but the new CAISI agreements signaled a more proactive, direct oversight role for the government, as opposed to relying solely on voluntary commitments from companies.

To understand the significance of these agreements, one must look at the history of AI safety collaborations between the U.S. government and major AI labs. In July 2023, the Biden administration secured voluntary commitments from several leading AI companies, including OpenAI, Anthropic, Google, and Microsoft, to subject their models to external testing before public release. Those commitments were followed in October 2023 by a broader executive order on AI, which also directed NIST to develop testing standards. However, many critics argued that voluntary measures lacked teeth and transparency. The subsequent establishment of the U.S. AI Safety Institute within NIST, the body later renamed CAISI, was seen as an attempt to institutionalize the testing process and ensure that evaluations were conducted by government experts, not just by the companies themselves or third-party contractors.

The current agreements with Google DeepMind, Microsoft, and xAI represent an expansion of these efforts. xAI, founded by Elon Musk, is a relatively new player in the frontier AI space but has quickly drawn attention for its ambitious models. Google DeepMind, a unit of Alphabet, is a long-time leader in AI research, while Microsoft has invested heavily in OpenAI and runs its own AI initiatives. By bringing these three companies under formal agreements, CAISI was positioning itself to gain unprecedented access to the most advanced commercial AI systems before they reached the public. The archived announcement emphasized that these agreements support information-sharing and ensure a clear understanding in government of AI capabilities and the state of international AI competition.

The timing of the page removal is also notable. It follows reports that the Trump administration, which took office in January 2025, has been reassessing many of its predecessor’s AI policies. While the AI Action Plan referenced in the announcement is a Trump-era initiative, the Biden-era executive order on AI was rescinded shortly after the new administration took office. The removal of the CAISI page could be a routine administrative error, but it could also reflect policy shifts or internal disagreements about how public these agreements should be. Because the announcement contained no classified information, its disappearance has puzzled observers who have been tracking the evolution of U.S. AI policy.

Furthermore, the agreements themselves may be subject to renegotiation or cancellation. The archived text refers to partnerships renegotiated to reflect the secretary of commerce’s directives, suggesting that the terms may already have changed behind the scenes. If the agreements are still in effect, why remove the public notice? This opacity sits uneasily with the announcement’s own emphasis on information-sharing. Without an official announcement, journalists and researchers cannot verify which models are being tested, what safety criteria are being used, or how the results are being reported.

Reactions from the AI policy community have been swift. Many experts took to social media to express concern, with some drawing parallels to other instances of government information disappearing from public view. The AI Now Institute, a prominent advocacy group, issued a statement calling for the immediate restoration of the page and a full explanation from the Commerce Department. Others noted that even if the page removal was unintentional, it reflects a broader lack of institutional memory and commitment to transparency in AI governance. The fact that the only remaining record is a third-party crawl from the Wayback Machine highlights the fragility of government communications in the digital age.

From a technical standpoint, the removal could be the result of a simple website restructuring. Government websites frequently undergo updates and reorganizations, and pages are sometimes removed or broken by accident during migrations. However, the fact that the URL first returned an error page and then began redirecting to the main CAISI page suggests a deliberate change. A broken link on its own would simply produce an error, but a redirect has to be configured by someone, which implies the page was intentionally taken offline. Still, without official comment, it is impossible to know whether this reflects a management decision or cleanup after a technical glitch.

In the broader context, this incident raises important questions about the federal government’s ability to consistently communicate its AI policies. As the United States competes with China and the European Union in setting global AI standards, the reliability and accessibility of official information are crucial. The EU’s AI Act establishes a comprehensive regulatory framework with clear publication requirements, while China’s approach to AI governance is opaque but systematic. The U.S., by contrast, appears to be relying on a patchwork of announcements that can be easily removed or altered without explanation. This uncertainty erodes trust among industry stakeholders, international partners, and the public.

The implications for the specific companies involved are also significant. Google DeepMind, Microsoft, and xAI have all made public statements supporting responsible AI development. Yet their willingness to engage with CAISI may be contingent on the government maintaining clear lines of communication. If the government cannot even keep a press release online, how can companies be confident that the testing process will be stable and predictable? Some analysts worry that the removal could be a sign that the agreements are being scrapped or that the companies are pressuring the government to reduce transparency. Neither scenario bodes well for future collaboration.

It is worth noting that the Department of Commerce and the White House have not responded to requests for comment. Such requests typically yield at least a “no comment” or a boilerplate statement, so the total silence stands out. As the news cycle moves on, the missing page may become a footnote in the broader narrative of U.S. AI policy. However, for those who follow the minutiae of government AI efforts, the incident is a stark reminder that public accountability is fragile. The Wayback Machine may be the only guarantor of memory in an era of digital ephemera.

Meanwhile, the three AI companies in question have not issued any statements about the missing page. Their silence suggests that they may not want to draw attention to the matter, perhaps because they are still negotiating or because they view the page removal as an internal government issue. Regardless, the public has a strong interest in knowing how powerful AI systems are being vetted for safety. The announcement was supposed to inform the public about those safeguards. Its removal from the official record does the opposite.

In the days and weeks ahead, it will be crucial to monitor whether the page is restored or replaced with a new announcement. If a revised version appears, it will be telling to compare the language and scope. Any changes could signal a shift in policy or a scaling back of commitments. If the page remains missing, pressure will mount on the Commerce Department to explain itself. Journalists and transparency advocates will likely file Freedom of Information Act requests to obtain the text of the agreements and the minutes of any meetings where the removal was decided.

The incident also underscores the importance of independent oversight of government AI activities. While CAISI is a relatively new body, its role in evaluating frontier models gives it enormous influence over which technologies reach the market. Without transparency, there is no way for outside experts to verify that the evaluations are thorough and unbiased. The missing page may be a small technical glitch, but it could also be a symptom of deeper problems in the architecture of U.S. AI governance.

Source: Gizmodo News